From Gaza to Iran, AI is increasingly applied in warfare.


By Mohammad Reza Moradi, General Director of Mehr News Agency’s Foreign Languages and International News Department, Tehran, Iran

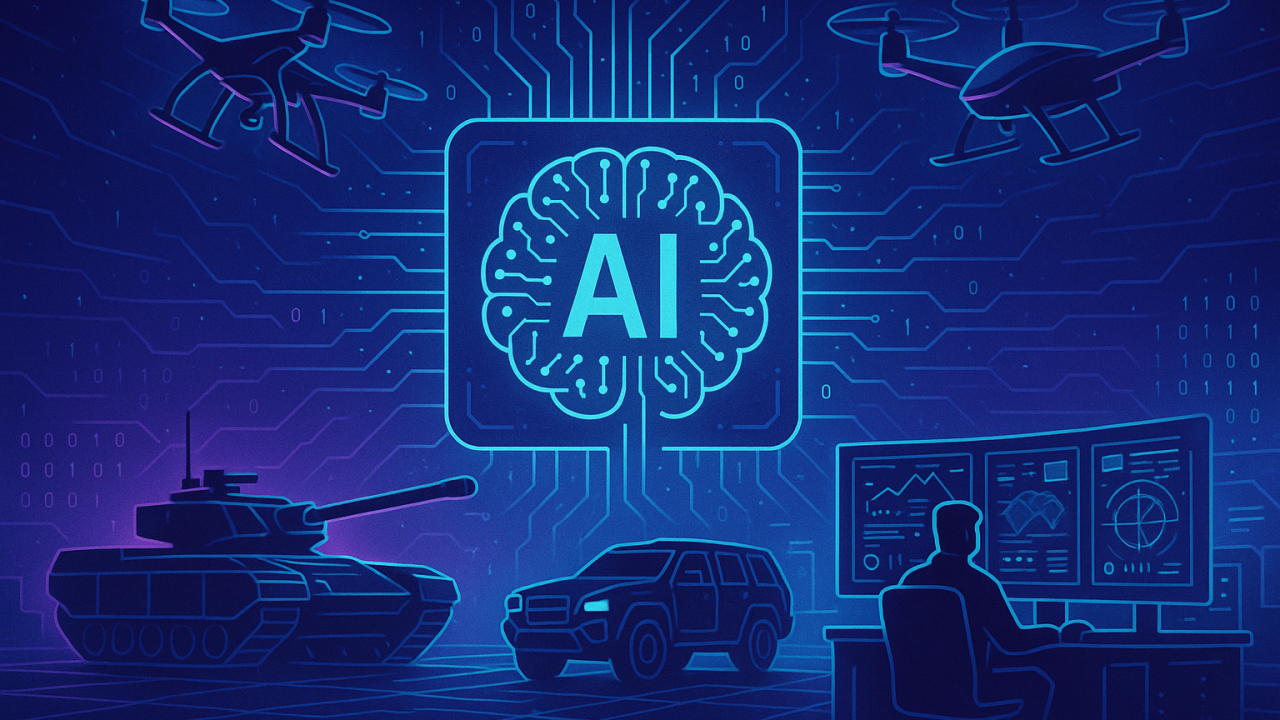

Recent military developments indicate that warfare has entered a new phase—one in which the “speed of data processing” is as decisive as “firepower.” In the past, military superiority was measured by troop numbers, equipment, or geographical control. Today, however, the ability to analyze vast amounts of data and convert it into operational decisions within fractions of a second has become a key determinant of advantage. In this context, artificial intelligence is no longer merely a supporting tool but has emerged as one of the central pillars in the design and execution of military operations.

The war in Gaza and, in particular, the recent forty-day war against Iran are two significant examples of this historical transition. In both cases, the use of AI-based systems for target identification, strike prioritization, and even recommending weapon types has reached an unprecedented level. At the center of this transformation, one company stands out above the rest: Palantir Technologies, a firm deeply intertwined with the U.S. security and military establishment that now plays a key role in what can be described as “algorithmic warfare.”

Palantir: The Architecture of Data-Driven Warfare

Palantir was founded in 2003 by a group that included Peter Thiel, and from the outset it developed close ties with institutions such as the Central Intelligence Agency. Through platforms like Gotham and Foundry, the company became one of the most important providers of data analytics technology for U.S. intelligence and defense agencies.

At its core, Palantir’s work is based on a simple yet powerful idea: transforming massive volumes of fragmented data into a coherent operational picture for decision-making. These data streams can include satellite imagery, drone feeds, signals intelligence, human intelligence, and even social media data. What Palantir offers goes beyond analysis; it provides a “digital command system” capable of recommending targets, prioritizing attacks, and even assessing potential outcomes.

One of its most significant military products is the Maven Smart System, developed within the Pentagon’s Project Maven. This system uses machine learning algorithms to process battlefield data at scale and extract potential targets. Reports from U.S. sources indicate that it can complete, within seconds, tasks that previously took hours or days.

Palantir’s close collaboration with the United States Department of Defense, along with multi-billion-dollar contracts, highlights its strategic importance in the evolving U.S. military doctrine. In effect, Palantir can be seen as part of a “digital kill chain”—a system that begins with data collection and ends with the execution of strikes.

At the same time, critics argue that such reliance on algorithms increases the distance between decision-makers and the human consequences of war. When commanders rely on AI-generated outputs instead of direct analysis, the risk of overdependence on machines and reduced human judgment becomes significant—an issue repeatedly raised by international law experts and UN bodies in recent years.

Artificial Intelligence and the War in Gaza

The war in Gaza can be viewed as one of the first real-world laboratories for the large-scale application of AI in military operations. Reports from credible international media indicate that the Israeli military has used AI-based systems to identify targets and design strikes.

Within this framework, algorithms analyze diverse datasets to generate lists of potential targets—ranging from individuals to buildings and infrastructure. These lists are then passed to analysts and commanders for final decisions. However, the speed and scale of this process have drastically reduced the time available for human review.

Some reports suggest that, in certain cases, “acceptable error margins” in these systems have effectively incorporated civilian casualties into operational calculations. This has sparked serious legal and ethical debates about the nature of AI-driven warfare.

In such a context, the role of companies like Palantir Technologies becomes increasingly significant. Although the exact details of Palantir’s cooperation with the Israeli military are not fully public, available evidence suggests that technologies similar to those used in Project Maven have played a role in data analysis and operational decision support.

A key observation from the Gaza war is that AI has not necessarily increased precision; rather, in some cases, it has expanded the scale of attacks. In other words, what has changed is not merely “how wars are fought,” but the “speed and volume of warfare”—a shift with profound human consequences.

Artificial Intelligence and the Forty-Day War Against Iran

The recent forty-day war against Iran can be regarded as the first serious instance of “large-scale AI-enabled warfare,” where data-driven systems played a central role not only in analysis but across the entire kill chain. At the center of this process was the Maven Smart System, developed by Palantir Technologies, which effectively became the backbone of operations.

According to reports, in the first 24 hours of the operation—referred to as “Epic Fury”—more than 1,000 targets inside Iran were struck. Such a figure would be nearly unimaginable in conventional warfare. This scale was made possible not by a sudden increase in firepower, but by the intense compression of decision-making processes through AI systems.

Palantir’s role in this context was concrete and operational. The Maven system integrates disparate data streams—from satellite imagery and drone footage to radar inputs, signals intelligence, and human intelligence—into a unified platform. Using computer vision and pattern recognition algorithms, it converts these data into “target packages”: actionable sets of information that include coordinates, target type, threat level, and recommended engagement methods.

In one Pentagon demonstration, the system was shown to identify a specific vehicle within a parking lot from vast datasets and immediately suggest an appropriate strike option. This illustrates how the gap between “target detection” and “decision to strike” has been reduced to a matter of a few clicks.

More importantly, Maven has not only increased speed but also transformed the scale of warfare. Reports indicate that it has expanded targeting capacity from fewer than 100 targets per day to thousands, with the ability in some cases to identify hundreds of targets per hour. For this reason, the Iran war is considered one of the first real-world cases of “mass target generation by artificial intelligence.”

However, this capability has also created significant risks. One of the most controversial incidents was the strike on a school in Minab, southern Iran. Investigations suggested that the site had remained listed in U.S. intelligence databases as a “military target,” even though it had been converted into an educational facility years earlier. The Maven system processed this outdated data and validated the site as a legitimate target, and because of the speed of the decision-making cycle, there was insufficient time for human verification.

This example illustrates that the issue is not merely an “algorithmic error,” but rather the interaction of three factors: incomplete or outdated data, excessive reliance on system outputs, and pressure for rapid decision-making. In essence, the very feature that makes such systems powerful—speed—also becomes their critical vulnerability.

At the strategic level, what occurred in this war represents more than the use of a new tool; it reflects a transformation in the nature of military power itself. By converting data into decisions, Palantir has effectively become part of the command structure of warfare. As some analysts suggest, the company is no longer just a technology contractor, but an “invisible architect of the battlefield.”

Ultimately, the forty-day war against Iran demonstrates that the future of warfare will be determined not by the number of tanks or fighter jets, but by the ability to generate, process, and operationalize data in real time—where algorithms may act faster than humans, though not necessarily more accurately or responsibly.

Conclusion

What has been observed in Gaza and the forty-day war against Iran is not merely the adoption of a new technology, but the emergence of a new paradigm in warfare—one in which algorithms play an increasing role in decisions of life and death.

Artificial intelligence can enhance precision, speed, and efficiency, but it also introduces serious risks: reduced human oversight, increased potential for error, and the transformation of warfare into a “mechanized process” where human accountability is diminished.

As a symbol of this transformation, Palantir Technologies illustrates how the convergence of technology and military power can produce tools that blur the line between data analysis and the execution of violence.

In the end, the central question is not what artificial intelligence can do, but how it is used. Without the development of appropriate legal and ethical frameworks, what is today presented as “technological superiority” may become a driver of unprecedented global violence.
